ArangoDB vs. CouchDB Benchmarking | ArangoDB 2012

A side-effect of measuring the impact of different journal sizes was that we generated some performance test results for CouchDB, too. They weren’t included in the previous post because it was about journal sizes in ArangoDB, but now we think it’s time to share them.

Test setup

The test setup and server specification are the ones described in the previous post. In fact, this is the same test, now also including data for CouchDB.

Here’s a recap of what the test does: the test measures the total time to insert, delete, update, and get individual documents via the databases’ HTTP document APIs, with varying concurrency levels. Time was measured from the client perspective. We used a modified version of httpress as the client. The client and the databases were running on the same physical machine. CouchDB was shut down while ArangoDB was running, and vice-versa.
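The measurement described above can be sketched roughly as follows. This is not the modified httpress client used for the tests, only a minimal illustration of the idea: run a fixed number of operations with a given concurrency level and record the total wall-clock time as seen by the client. The names `run_benchmark` and `fake_insert` are hypothetical, and `fake_insert` merely sleeps where a real test would issue one HTTP request.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def run_benchmark(operation, num_operations, concurrency):
    """Call operation(i) for each i in range(num_operations) using
    `concurrency` worker threads; return total wall-clock seconds."""
    start = time.perf_counter()
    with ThreadPoolExecutor(max_workers=concurrency) as pool:
        # list() consumes all results, so any exception surfaces here.
        list(pool.map(operation, range(num_operations)))
    return time.perf_counter() - start


def fake_insert(i):
    # Stand-in for one HTTP document insert (assumption: replace this
    # with a real request against the database under test).
    time.sleep(0.0005)
    return i


if __name__ == "__main__":
    for concurrency in (1, 2, 4, 8):
        elapsed = run_benchmark(fake_insert, 1000, concurrency)
        print(f"concurrency={concurrency}: {elapsed:.3f}s")
```

Because the total time is taken around the whole run, it includes client-side overhead, which matches the "measured from the client perspective" approach of the test.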

waitForSync was turned off in ArangoDB, and delayed_commits was turned on in CouchDB, so neither database synced data to disk after each operation. Neither compression nor compaction was used in CouchDB.

The documents used for the two databases were almost but not fully identical due to the restrictions the databases imposed. Here are the differences:

- document ids (“_id”): ArangoDB uses numerical ids and CouchDB uses string ids. Still, the same literal id values were used for both.

- revision ids (“_rev”): CouchDB generates _rev values itself as unique strings, whereas ArangoDB either auto-generates them as unique integers or allows the client to supply its own integer value.

As mentioned before, the tests were originally run to show the impact of varying journal sizes in ArangoDB, and how this would compare to CouchDB. For this reason, the results contain the following series for each sub-operation:

- arangod32: ArangoDB 1.1-alpha with 32 MB journal size

- arangod16: ArangoDB 1.1-alpha with 16 MB journal size

- arangod8: ArangoDB 1.1-alpha with 8 MB journal size

- arangod4: ArangoDB 1.1-alpha with 4 MB journal size

- couchdb: CouchDB 1.2

Performance, 10,000 operations

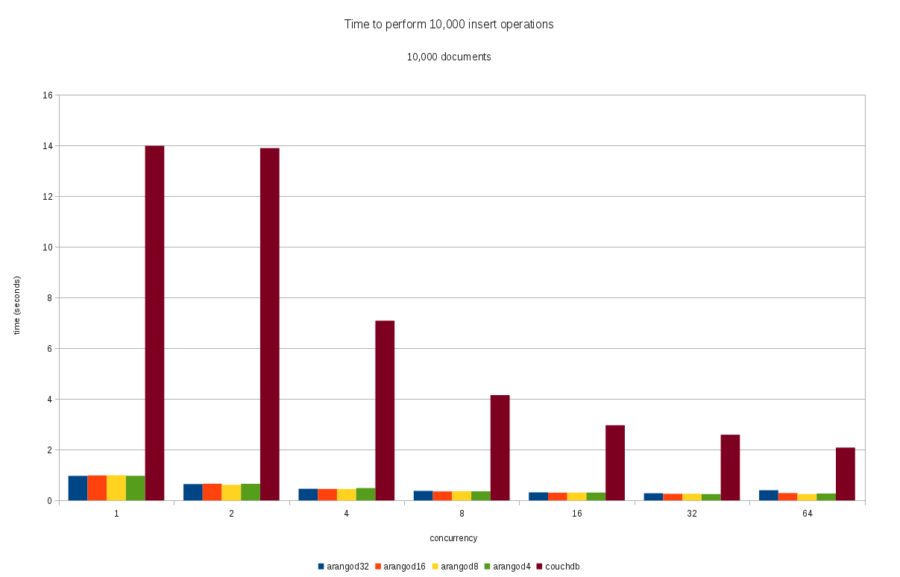

Following are the results that show the total time it took to execute 10,000 individual HTTP document insert operations via 10,000 individual HTTP API calls, with varying concurrency levels.

Insert performance

Delete performance

The results for 10,000 individual delete operations were (results only containing delete time, not insert time):

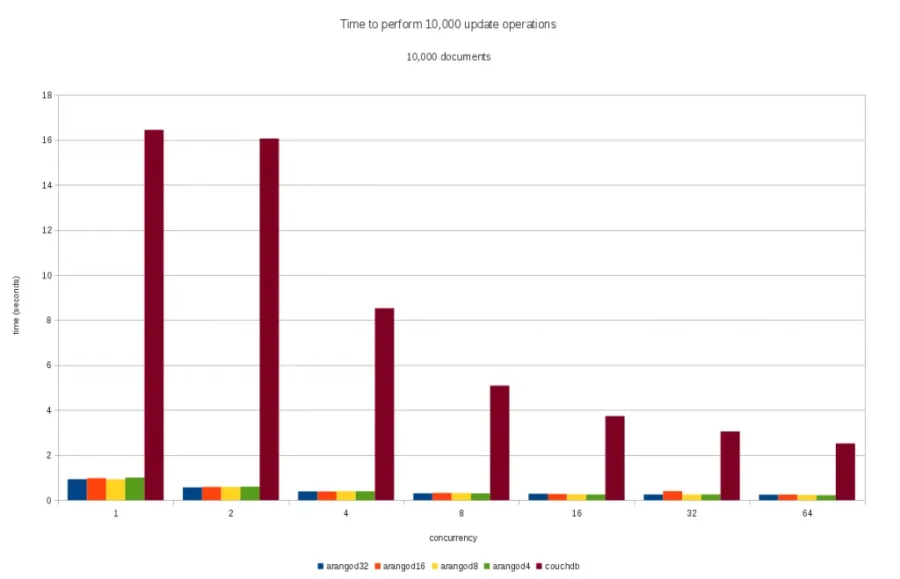

Update performance

The results of updating 10,000 previously inserted documents were (results only containing update time, not insert time):

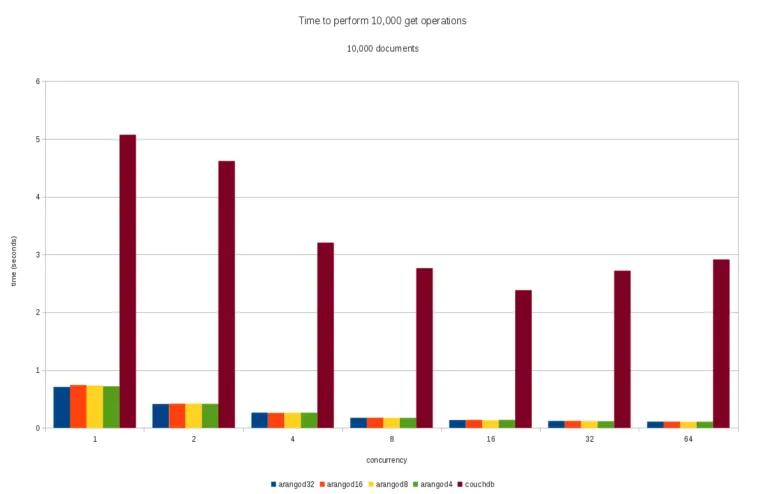

Get performance

To retrieve 10,000 documents individually, the following times were measured (results only containing total time of get operations, not insert time):

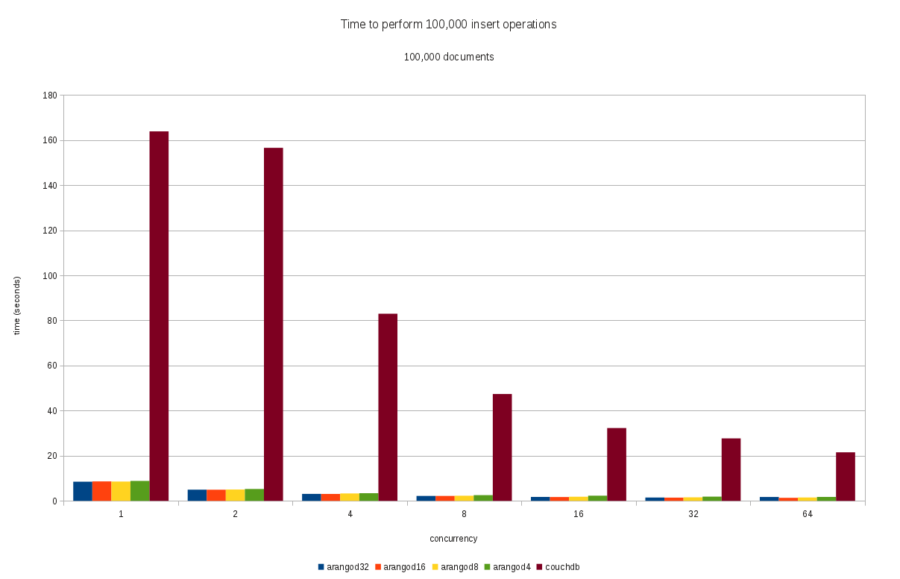

Performance, 100,000 operations

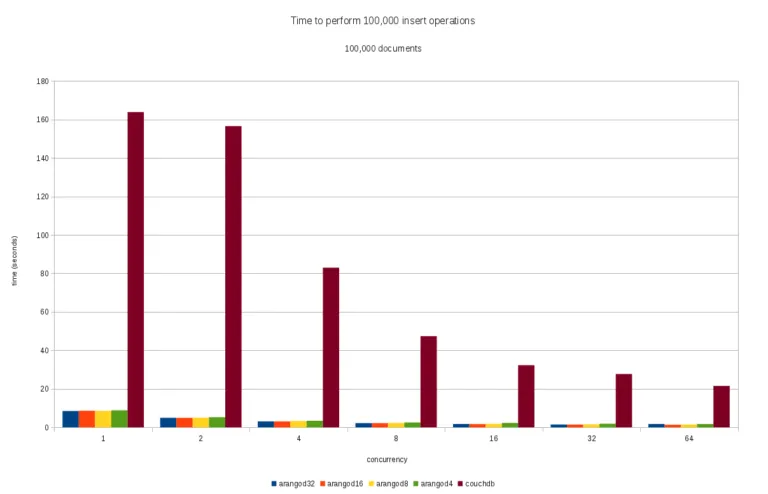

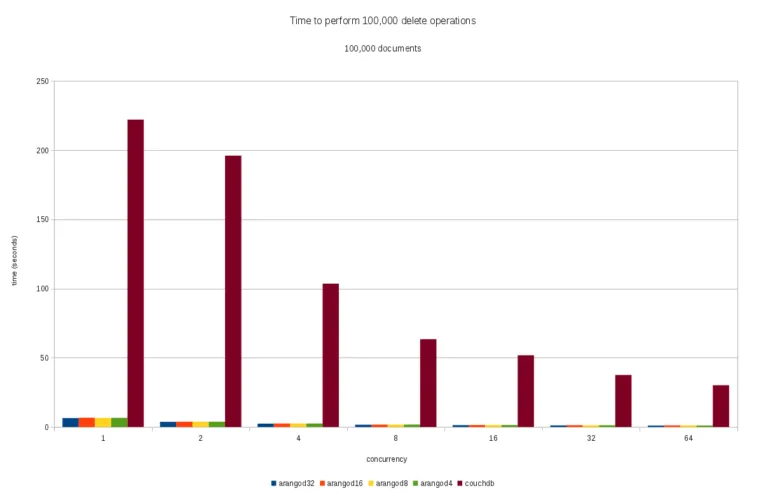

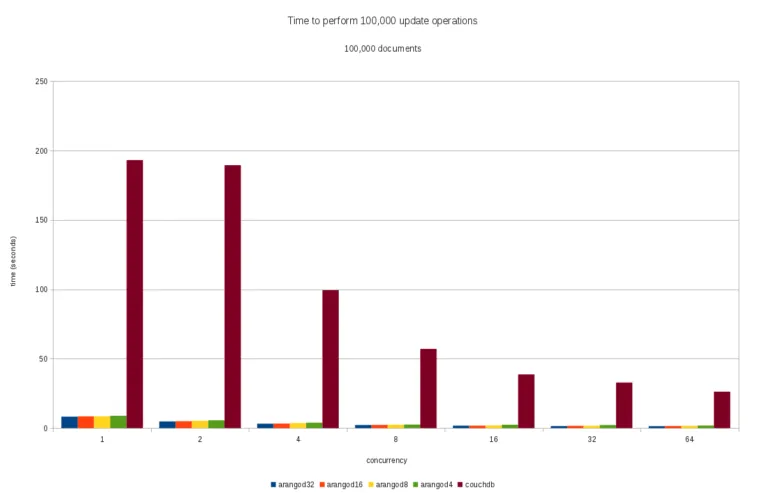

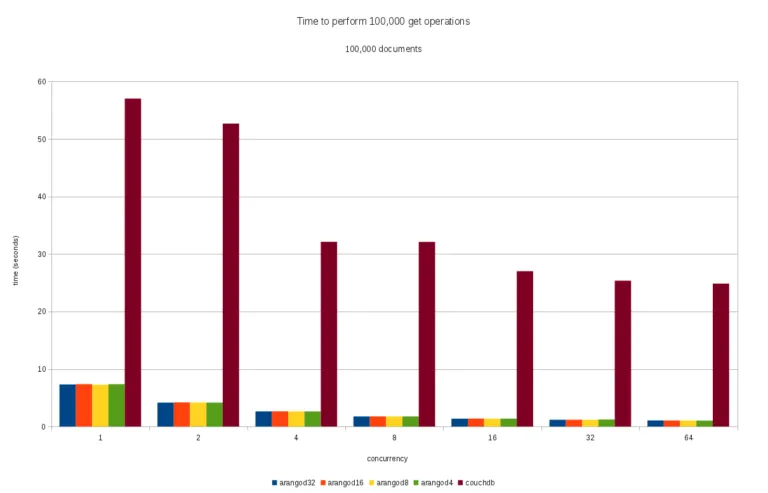

Following are the results for the same test cases as before (insert, delete, update, get operations) but now with 100,000 instead of 10,000 documents.

Insert performance

Delete performance

Update performance

Get performance

Conclusion

ArangoDB performs quite well in this benchmark when compared to CouchDB. Total times needed to insert, delete, update, and get documents were all much lower for ArangoDB than they were for CouchDB. It was surprising to see that this was the case both with and without concurrency.

Caveats (a.k.a. “LOL benchmarks”)

Though the results shown above all point in one direction, keep in mind that this is just a benchmark and that you should never trust any benchmark without questioning it.

First, you should be aware that this is a benchmark for a very specific workload. That workload might or might not be realistic for you. For example, not many people (though I’ve seen this) would insert 100,000 documents with 100,000 individual HTTP API calls if the application allows the documents to be inserted in batches. Both ArangoDB and CouchDB offer a bulk import API for exactly this purpose. However, if many different clients are connected and each client does a few document operations, then the tested workload scenario might well be realistic.
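To put the batching argument into numbers, here is a small sketch that splits a list of documents into batches and counts the resulting requests. The helper `batches` and the batch size of 1,000 are assumptions for illustration; the actual bulk import calls of either database are deliberately left out.

```python
def batches(documents, batch_size):
    """Yield consecutive slices of `documents` with at most `batch_size` items."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    for start in range(0, len(documents), batch_size):
        yield documents[start:start + batch_size]


# Made-up documents, only to have something to count.
documents = [{"_id": str(i), "value": i} for i in range(100_000)]

# Each batch would become one bulk request instead of many single ones.
num_requests = sum(1 for _ in batches(documents, 1_000))
print(num_requests)  # 100
```

With these assumed numbers, 100,000 individual calls shrink to 100 bulk calls, which is why the per-document workload tested here is not representative of batch-friendly applications.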

Second, the type of documents used in these tests might have favored ArangoDB, because all documents had identical attribute names and types (but not ids and values). ArangoDB can re-use an identical document structure (these are called “shapes” in ArangoDB lingo) for multiple documents and does not need to save structures redundantly. CouchDB doesn’t reuse document structures.
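The idea of re-using a document structure can be illustrated with a toy registry. This is not how ArangoDB implements shapes internally; it is only a sketch that assumes flat documents and treats the set of attribute names plus value types as the "shape". The names `shape_of` and `ShapeRegistry` are hypothetical.

```python
def shape_of(document):
    """Describe a flat document's structure as a hashable tuple of
    (attribute name, value type name) pairs, sorted by name."""
    return tuple(sorted((name, type(value).__name__)
                        for name, value in document.items()))


class ShapeRegistry:
    """Assigns one small integer id per distinct document structure."""

    def __init__(self):
        self._ids = {}

    def shape_id(self, document):
        shape = shape_of(document)
        # Reuse the existing id, or assign the next free one.
        return self._ids.setdefault(shape, len(self._ids))

    def __len__(self):
        return len(self._ids)


registry = ShapeRegistry()
docs = [{"name": f"user{i}", "age": i} for i in range(10_000)]
ids = {registry.shape_id(doc) for doc in docs}
print(len(registry))  # 1
```

All 10,000 documents in this example share one structure, so the structure is stored once. A data set with widely varying attributes would not benefit in the same way.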

Third, CouchDB also offers a lot of features that ArangoDB doesn’t have (or doesn’t have yet). These features might carry a performance penalty for CouchDB, which would have favored ArangoDB unfairly.

Fourth, there might also be some magic settings for CouchDB that substantially affect the read and write performance that we simply haven’t found yet. If someone could suggest any apart from delayed_commits and disabling compaction, we’d be happy to try again with modified settings.

As always, please conduct your own tests with your specific data and workload to see what results you will get.

3 Comments


I miss a cross-action benchmark: get, insert, update and delete at the same time.

Just one action at a time is not a good test case. It should be something like I G I U G I D in a loop of 100,000 iterations. The update and delete should target different random entries and not the last inserted one.

There are so many things that could be tested, but unfortunately not so much time to do it.

But yes, doing a cross-action benchmark would be nice and hopefully we’ll find the time to do it at some later point. If so, I’d suggest not using random entries because it would introduce some non-deterministic behavior (which might or might not matter for the results).

Maybe one could use a simple pseudo-random number generator like “x * 13 + 5”?
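A sketch of that suggestion, under some assumptions: the formula “x * 13 + 5” is taken modulo a power of two (the modulus is not stated above), and values outside the valid index range are skipped. With a power-of-two modulus this generator cycles through every value before repeating, so each document index is visited exactly once, in a scrambled but fully reproducible order. The function name `lcg_indices` is hypothetical.

```python
from itertools import cycle, islice


def lcg_indices(count, multiplier=13, increment=5, seed=0):
    """Yield every index in range(count) exactly once, in a fixed
    scrambled order produced by x -> (x * 13 + 5) mod 2**k."""
    if count < 1:
        return
    # Smallest power of two that is >= count (and at least 4).
    modulus = 4
    while modulus < count:
        modulus *= 2
    x = seed % modulus
    for _ in range(modulus):
        if x < count:  # skip values that are not valid indices
            yield x
        x = (x * multiplier + increment) % modulus


# The mixed operation pattern from the first comment, repeated as needed.
PATTERN = "IGIUGID"
operations = list(islice(cycle(PATTERN), 14))
targets = list(lcg_indices(10))
print(operations)
print(targets)
```

Running the script twice gives the same operation order and the same targets, so the results stay comparable between runs and between databases.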