Dynamic Script Execution Performance in ArangoDB | ArangoDB 2012

In the previous post, we published performance results for ArangoDB’s HTTP and networking layer compared with those of some popular web servers. That benchmark assessed the general performance (and overhead) of ArangoDB’s network and HTTP layer.

Using ArangoDB as an application server

While HTTP is a good and (relatively) portable mechanism for shipping data between clients and servers, it is only a transport protocol. People will likely use ArangoDB not only because it supports HTTP, but primarily because it is a database and an application server.

In this post, we’ll look at what happens when ArangoDB is used as an application server, which requires it to run user-defined actions. Currently, ArangoDB supports scripting in:

- Javascript, executed on the server using Google V8

- Ruby, executed on the server using MRuby

MRuby is still at an early stage of development and not yet officially marked as “stable”. It already works well in many cases, but it is not covered in this post.

The benchmark

We wanted to find out how much throughput (i.e. requests per second) ArangoDB achieves when executing dynamic scripts. As mentioned above, we measured only the Javascript variant, which uses Google V8.

To measure throughput, we set up simple benchmarks that repeatedly called the same Javascript actions in ArangoDB. The actions were triggered via HTTP calls.

To get reference figures, we also executed the same actions in node.js (again triggered via HTTP calls). node.js is an interesting reference because, like ArangoDB, it uses Google’s V8 engine for script execution. The versions used were:

- ArangoDB-1.0-alpha3

- node.js v0.8.1

node.js was tested in two configurations: in standard mode (i.e. single-threaded) and with the cluster module, spawning 8 child processes.
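
A minimal sketch of the node.js cluster configuration follows. It assumes a current node.js release rather than v0.8.1. The primary process forks 8 workers, and each worker runs its own HTTP server on the shared port. The port, the response body, and the names `NUM_WORKERS`, `handleRequest` and `startCluster` are hypothetical, because the post does not include the reference code.

```javascript
const cluster = require("cluster");
const http = require("http");

// The benchmark spawned 8 children; the port is a hypothetical choice.
const NUM_WORKERS = 8;
const PORT = 8000;

// Hypothetical stand-in for the benchmarked action: a small static reply.
function handleRequest(req, res) {
  const body = "Hello from worker " + process.pid + "\n";
  res.writeHead(200, {
    "Content-Type": "text/plain",
    // An explicit length means node.js does not need chunked transfer
    // encoding for this reply.
    "Content-Length": Buffer.byteLength(body),
  });
  res.end(body);
}

// The primary process forks the workers; each worker runs its own HTTP
// server, and the workers share the listening port.
function startCluster() {
  // node.js 16+ names this flag isPrimary; older versions use isMaster.
  const isPrimary =
    cluster.isPrimary !== undefined ? cluster.isPrimary : cluster.isMaster;
  if (isPrimary) {
    for (let i = 0; i < NUM_WORKERS; i++) {
      cluster.fork();
    }
  } else {
    http.createServer(handleRequest).listen(PORT);
  }
}
```

To start the server, call `startCluster()` at the end of the file. For the single-threaded configuration, `http.createServer(handleRequest).listen(PORT)` on its own is enough.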

Scripts tested

We tested two scripts in both ArangoDB and node.js:

- “static” variant: a script that answers each request with a static 67-byte response. The script itself uses little CPU or other resources, so most of the total time is spent in network I/O and data conversion rather than in the script. Real-world user actions will rarely be this simple, but we used this variant to estimate the maximum achievable throughput for dynamic scripts.

- “factorial” variant: a script that calculates the sum of the factorials of 1 through 100, using a recursive factorial function. This script does a fair amount of work, so I/O and data conversion account for a smaller share of the total time.
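
Here is a minimal sketch of the two workloads as plain Javascript functions. The function names and the placeholder body are hypothetical. The post gives neither the actual 67-byte response nor the code used to register the actions in ArangoDB or node.js, so no server API appears here.

```javascript
// "static" variant: always returns the same 67-byte body.
// The content is a placeholder; only the length matches the post.
const STATIC_BODY = "x".repeat(67);

function staticAction() {
  return STATIC_BODY;
}

// Recursive factorial, as described for the "factorial" variant.
// Javascript numbers are IEEE-754 doubles, so large results are
// approximate (100! is about 9.33e157) but still finite.
function factorial(n) {
  return n <= 1 ? 1 : n * factorial(n - 1);
}

// Sum of the factorials of 1..limit; each factorial is recomputed
// from scratch, as a naive recursive script would do.
function sumOfFactorials(limit) {
  let sum = 0;
  for (let i = 1; i <= limit; i++) {
    sum += factorial(i);
  }
  return sum;
}

// "factorial" variant: the response body is the sum for 1..100.
function factorialAction() {
  return String(sumOfFactorials(100));
}
```

Each factorial is recomputed from scratch, so `sumOfFactorials(100)` makes 5050 recursive `factorial` calls per request. In this sketch, that is where the extra CPU work of this variant comes from.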

In all cases, the servers accepted the HTTP request, passed the request data to the Javascript handler, executed the script, and returned the response to the client.
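
The four steps can be illustrated with a toy request path in Javascript. This is a hypothetical sketch, not a description of how ArangoDB or node.js work internally: both parse HTTP in native code and then hand the data to V8. The sketch only marks two points: where the request bytes become Javascript values, and where the response becomes bytes again. That is the data conversion mentioned for the “static” variant. The function name `processRequest` is made up for illustration.

```javascript
// Toy per-request path: raw bytes in, Javascript values for the
// handler, raw bytes out. Only the first request line is parsed.
function processRequest(rawRequest, handler) {
  const encoder = new TextEncoder();

  // 1. Accept: decode the raw request bytes (UTF-8) into a JS string.
  const text = new TextDecoder("utf-8").decode(rawRequest);

  // 2. Pass the request data to the handler as a plain object.
  const [method, path] = text.split("\r\n")[0].split(" ");
  const request = { method, path };

  // 3. Execute the script.
  const body = String(handler(request));

  // 4. Return the response: encode head and body back into bytes.
  const bodyBytes = encoder.encode(body);
  const head = encoder.encode(
    "HTTP/1.1 200 OK\r\n" +
      "Content-Type: text/plain; charset=utf-8\r\n" +
      "Content-Length: " + bodyBytes.length + "\r\n" +
      "Connection: Keep-Alive\r\n\r\n"
  );
  const response = new Uint8Array(head.length + bodyBytes.length);
  response.set(head, 0);
  response.set(bodyBytes, head.length);
  return response;
}
```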

Test setup

We measured the total time it took a test client to send 100,000 (100K) identical HTTP GET requests to the server, have the server execute the script action for each request, and receive all responses. This total time was then converted into “requests per second” (the number of requests divided by the total time in seconds).

The tests were run with the number of concurrent client connections increasing from 1 to 512. For each concurrency level, we conducted 3 test runs and used their average as the result for that level.
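
The conversion from total time to throughput, and the averaging over 3 runs, can be written down as below. This sketch rests on two assumptions the post does not state. First, the 3 runs are averaged as requests-per-second values rather than as times. Second, the concurrency levels between 1 and 512 are powers of two. The function names are hypothetical. For example, 100,000 requests answered in 4 seconds correspond to 25,000 requests per second.

```javascript
const TOTAL_REQUESTS = 100000;

// Throughput of one run: number of requests divided by total time.
function requestsPerSecond(totalSeconds, requests = TOTAL_REQUESTS) {
  return requests / totalSeconds;
}

// Arithmetic mean of a list of values.
function averageOfRuns(values) {
  return values.reduce((sum, v) => sum + v, 0) / values.length;
}

// Result for one concurrency level from the total times of its runs.
// The arrow function keeps map() from passing the index as `requests`.
function levelResult(runSeconds) {
  return averageOfRuns(runSeconds.map((t) => requestsPerSecond(t)));
}

// Assumption: concurrency levels are the powers of two from 1 to 512.
function concurrencyLevels(max = 512) {
  const levels = [];
  for (let c = 1; c <= max; c *= 2) {
    levels.push(c);
  }
  return levels;
}
```

The order of averaging matters. Averaging the three total times first and converting afterwards gives a lower or equal figure, because the mean of the rates is never smaller than the rate computed from the mean time.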

Test scenarios

We used two test scenarios:

- In the “local” scenario, the client ran on the same physical host as the server.

- In the “network” scenario, the client ran on a different physical host, and the requests went over the network. Client and server were in the same network and connected to the same switch.

In both scenarios, client and server communicated via HTTP over TCP/IP, and every request was sent with a “Connection: Keep-Alive” header. HTTPS/SSL was not tested.

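The test client for all tests was ApacheBench 2.3, invoked with the following command (-k enables HTTP Keep-Alive, -c sets the number of concurrent connections, and -n the total number of requests):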

./ab -k -c $CONCURRENCY -n 100000 $URL
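
A sweep over concurrency levels can be scripted around this command. The sketch below assumes Node.js and an `ab` binary in the current directory, as in the command above. It builds the argument list and reads the “Requests per second” line from ab’s report. The helper names and the example URL are hypothetical.

```javascript
const { execFileSync } = require("child_process");

// Same flags as the command above: Keep-Alive, concurrency, request count.
function abArgs(concurrency, url, requests = 100000) {
  return ["-k", "-c", String(concurrency), "-n", String(requests), url];
}

// Reads the "Requests per second:" line from ab's text report.
function parseRequestsPerSecond(report) {
  const match = /^Requests per second:\s+([\d.]+)/m.exec(report);
  if (!match) {
    throw new Error("no 'Requests per second' line in ab output");
  }
  return Number(match[1]);
}

// Runs one measurement; assumes ./ab exists and the server is listening.
function runAb(concurrency, url) {
  const report = execFileSync("./ab", abArgs(concurrency, url), {
    encoding: "utf8",
  });
  return parseRequestsPerSecond(report);
}
```

For example, `runAb(32, "http://127.0.0.1:8000/static")` would run one measurement at concurrency level 32 and return ab’s requests-per-second figure.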

Server specs

ArangoDB and node.js were installed on the same physical server. To get comparable results, all software was compiled from source on that server, consistently using gcc/g++ 4.5.1 with optimisation level -O2.

The server had the following hardware and configuration:

- Linux Kernel 2.6.37.6-0.11, cfq scheduler

- 8x Intel(R) Core(TM) i7 CPU, 2.67 GHz

- 12 GB total RAM

- 1000 Mb/s, full-duplex network connection

- SATA II hard drive (7,200 RPM, 32 MB cache)

All logging facilities were turned off. ArangoDB and node.js were run under the same unprivileged user account, so the operating system imposed the same limits on both. CPU frequency scaling and recurring jobs (e.g. cron) were disabled during the tests.

We used the same test environment and scenarios as described in the previous post. Please refer to that article for more details, and be sure to read its caveats carefully.

Test results

All result details can be found in this file: Performance test results, dynamic code execution

“Local” scenario

We’ll first look at the results with client and server on the same physical host.

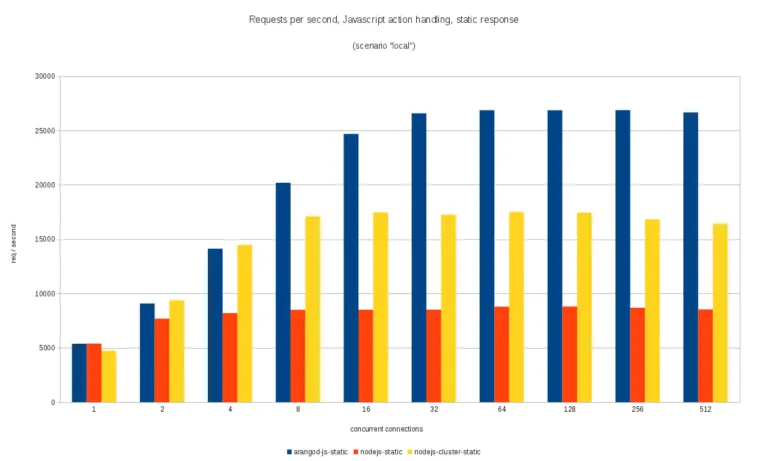

For the “static” variant, ArangoDB and single-threaded node.js were on par (i.e. achieved about the same throughput) when requests came in sequentially (concurrency level 1). As parallelism increased, ArangoDB’s throughput rose up to and including 32 concurrent connections and then stagnated. Its maximum throughput was about five times the initial value.

With single-threaded node.js, throughput did not increase much at higher concurrency: the maximum was about 1.6x the initial value. This was expected, as standard node.js is single-threaded by design. With the cluster module, node.js throughput increased up to 8 concurrent connections and then stagnated, at a maximum significantly lower than ArangoDB’s.

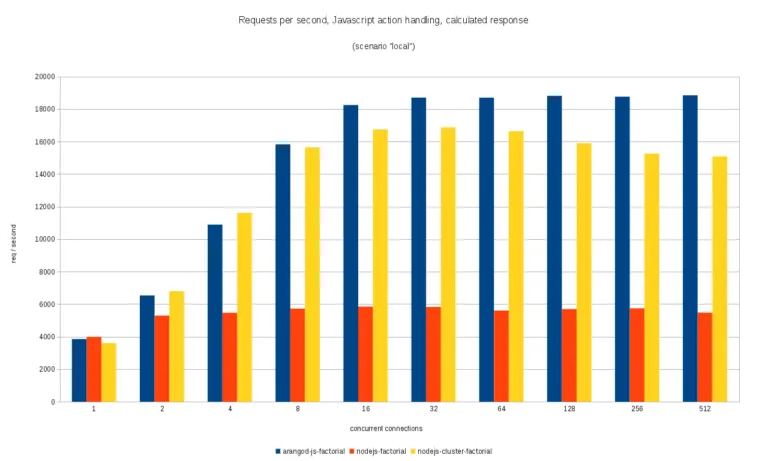

The results of the “factorial” variant showed about the same relative distribution as those of the “static” variant. Only the absolute values were lower, because the servers had to do more work per request.

“Network” scenario

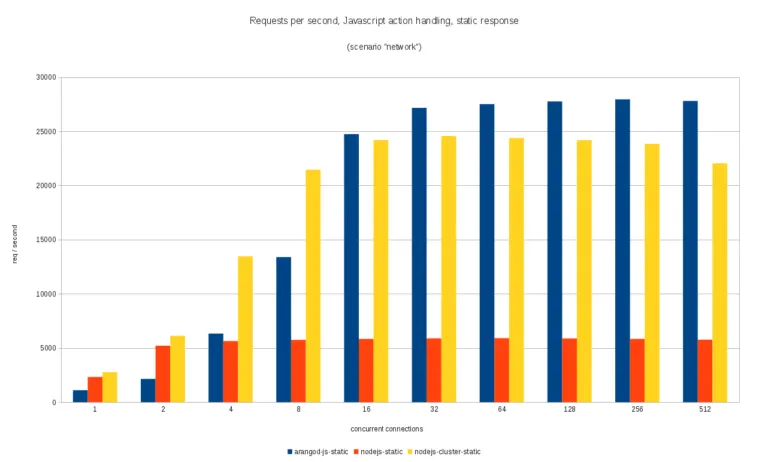

In the “network” scenario, ArangoDB’s throughput for the “static” variant was rather low with sequential execution. However, it increased up to and including 32 concurrent connections and then reached a plateau, with the steepest gains between 2 and 16 concurrent connections. In total, throughput increased more than tenfold, though from a low starting value.

Single-threaded node.js achieved substantially higher throughput both without concurrency and at concurrency level 2, but mostly stagnated after that, again due to its single-threaded design. In cluster mode, node.js throughput increased up to 32 concurrent connections and then declined slightly. The maximum throughput of the node.js cluster was a little lower than ArangoDB’s.

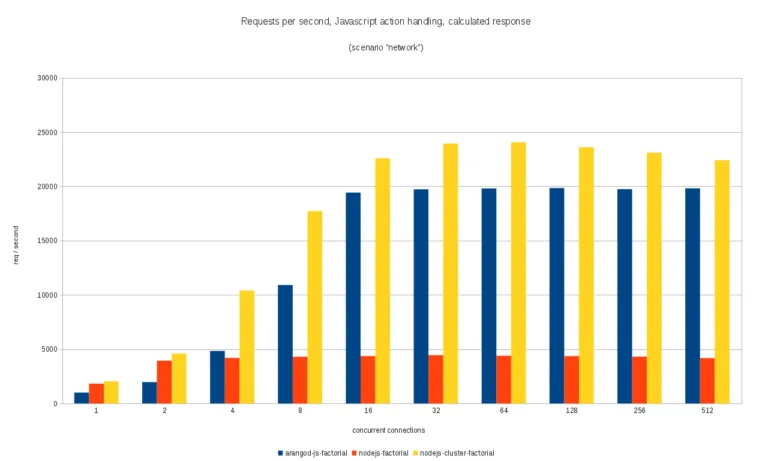

For the “factorial” variant, the relative results up to and including 8 concurrent connections were about the same as for the “static” variant, only with lower absolute values. Beyond that, ArangoDB reached its throughput plateau and the node.js cluster slightly outperformed it, reaching its own plateau at 32 concurrent connections.

Conclusions

In this benchmark, we observed that when client and server are on the same physical host, ArangoDB and node.js achieve about the same throughput for sequential execution of dynamic scripts.

When requests arrive in parallel, ArangoDB also executes scripts in parallel, because its multi-threaded architecture dispatches script execution to worker threads. Throughput increases up to some concurrency level and then reaches a plateau. Standard node.js falls behind due to its single-threaded design. The picture changes with the node.js cluster, which achieved almost the same throughput as ArangoDB.

When client and server are on different hosts, node.js in both single-threaded and cluster mode showed better throughput than ArangoDB at no or low concurrency. We need to investigate why and see whether ArangoDB can reach this level as well. For the “static” variant, ArangoDB’s throughput was slightly higher than the cluster’s at higher concurrency levels. In contrast, for the “factorial” variant, the node.js cluster achieved higher throughput than ArangoDB even at higher concurrency. Here, too, we need to find out why ArangoDB’s throughput plateaued at around 20K requests/second.

During this benchmark, we made a few further observations:

- conversion between native C/C++ values and V8 objects is quite expensive, especially for strings that must be converted from ASCII/UTF-8 to UTF-16. This overhead matters a lot, at least for the “static” variant, which does very little work in the script itself. It will be interesting to see how MRuby performs in this setup: it uses UTF-8, so no conversion is necessary.

- modifying ArangoDB’s V8 garbage collection interval (a startup parameter) changed the results only marginally.
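
The following minimal sketch of UTF-8 to UTF-16 transcoding in Javascript illustrates why this conversion costs time on every request. It is not the code used by V8 or ArangoDB, and it assumes well-formed input. It shows two things: every byte has to be inspected and copied into a new buffer, and ASCII text doubles in size along the way (67 bytes become 67 two-byte code units, i.e. 134 bytes). The function name `utf8ToUtf16` is hypothetical.

```javascript
// Decodes UTF-8 bytes into UTF-16 code units, one code point at a time.
// Assumes well-formed input; a production decoder must also reject
// malformed sequences, overlong forms and encoded surrogates.
function utf8ToUtf16(bytes) {
  const units = [];
  let i = 0;
  while (i < bytes.length) {
    const b0 = bytes[i];
    let cp;
    let len;
    if (b0 < 0x80) {
      cp = b0; len = 1;          // ASCII: 1 byte
    } else if (b0 < 0xe0) {
      cp = b0 & 0x1f; len = 2;   // 2-byte sequence
    } else if (b0 < 0xf0) {
      cp = b0 & 0x0f; len = 3;   // 3-byte sequence
    } else {
      cp = b0 & 0x07; len = 4;   // 4-byte sequence
    }
    // Append 6 payload bits from each continuation byte.
    for (let k = 1; k < len; k++) {
      cp = (cp << 6) | (bytes[i + k] & 0x3f);
    }
    i += len;
    if (cp < 0x10000) {
      units.push(cp);            // fits in one UTF-16 code unit
    } else {
      cp -= 0x10000;             // needs a surrogate pair
      units.push(0xd800 + (cp >> 10), 0xdc00 + (cp & 0x3ff));
    }
  }
  return Uint16Array.from(units);
}
```

A server also needs the reverse conversion, UTF-16 back to UTF-8, before sending a response body.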
