Discover ArangoDB 3.0: New Cluster Features

The 3.0 release of ArangoDB will introduce a completely overhauled cluster. It marks a major milestone on the road to “zero-maintenance”, where you can keep your focus on your product instead of your datacenter.

Synchronous replication

Earlier releases of ArangoDB already featured asynchronous replication. It was a good way to do backups and allowed for failover in case of a disaster. However, failover was mostly a manual job and, due to the asynchronous nature of the replication, data loss could happen.

With the release of ArangoDB 3.0, the cluster will feature synchronous replication. When creating a collection, you can now specify a replication factor. The cluster will then pick a leader and some followers from its DBServer pool. From then on, writes to that collection are synchronously replicated across your cluster.
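As a rough illustration, the sketch below builds the HTTP request for creating such a replicated collection. It assumes the collection endpoint `/_api/collection` accepts the attributes `numberOfShards` and `replicationFactor`. The coordinator address, the collection name and the helper `build_create_collection_request` are made up for this example. The request is only constructed, not sent.

```python
import json
import urllib.request


def build_create_collection_request(base_url, name, number_of_shards, replication_factor):
    """Build (but do not send) a POST request that creates a replicated collection.

    base_url is the address of a coordinator (hypothetical in this sketch).
    """
    if replication_factor < 1:
        raise ValueError("replication_factor must be at least 1")
    body = {
        "name": name,
        "numberOfShards": number_of_shards,
        # Number of copies of each shard: one leader plus (factor - 1) followers.
        "replicationFactor": replication_factor,
    }
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/_api/collection",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


if __name__ == "__main__":
    # Assumed coordinator address and collection name, for illustration only.
    request = build_create_collection_request("http://localhost:8529", "orders", 4, 3)
    print(request.get_method(), request.full_url)
    print(request.data.decode("utf-8"))
```

Passing the request to `urllib.request.urlopen` would send it to a running coordinator. With a replication factor of 3, each shard would be kept on one leader and two followers.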

From that moment on, your collection is accessible in a fail-safe manner (unless a serious bomb takes out your datacenter). Should the leader of the collection fail for whatever reason, the cluster will automatically fail over to a follower without any data loss.

Asynchronous replication

Why would you need asynchronous replication then anyway?

One word: Backups!

Failures during operation will cause the cluster to reorganize itself (which might briefly slow things down). Asynchronous replicas (we call them Secondaries), however, are simple, silent followers. Should a secondary server fail, the cluster will not care at all. If the secondary comes back at some point, it will slowly catch up.

This makes them perfectly suited for doing backups (apart from bombs there might also be floods, fires etc.):

Start a secondary, let it catch up, shut it down, backup, repeat.

Grow your cluster as your data grows

With ArangoDB 3.0, scaling your cluster is dead simple. Start a new primary DBServer, for example, and it will be included in your cluster straight away and used for new shards out of the box. This is sufficient for scaling slowly but surely. But of course you want to be ready for the moment when your product suddenly takes off and you are facing 20 times the traffic and load. In that case you need more computing power for the existing shards. 3.0 introduces zero-downtime shard rebalancing: add a few servers, rebalance and watch the load go away.
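To make the idea of rebalancing concrete, here is a toy sketch that evens out shard counts after new servers have joined. It is not ArangoDB's actual rebalancing logic. The greedy strategy, the function `rebalance` and the server and shard names are assumptions chosen purely for illustration.

```python
def rebalance(assignment, servers):
    """Greedy toy rebalancer (illustrative only, not ArangoDB's algorithm).

    assignment: dict mapping shard name -> server name
    servers: list of all server names, including newly added empty ones
    Returns a new assignment and the list of moves as (shard, source, target).
    """
    result = dict(assignment)  # work on a copy, leave the input untouched
    shards_by_server = {server: [] for server in servers}
    for shard, server in sorted(result.items()):
        shards_by_server[server].append(shard)

    moves = []
    while True:
        busiest = max(servers, key=lambda s: len(shards_by_server[s]))
        idlest = min(servers, key=lambda s: len(shards_by_server[s]))
        # Stop once the shard counts differ by at most one.
        if len(shards_by_server[busiest]) - len(shards_by_server[idlest]) <= 1:
            break
        shard = shards_by_server[busiest].pop()
        shards_by_server[idlest].append(shard)
        result[shard] = idlest
        moves.append((shard, busiest, idlest))
    return result, moves


if __name__ == "__main__":
    # Hypothetical cluster: db3 has just been added and holds no shards yet.
    before = {"s1": "db1", "s2": "db1", "s3": "db1", "s4": "db1", "s5": "db2", "s6": "db2"}
    after, moves = rebalance(before, ["db1", "db2", "db3"])
    for shard, source, target in moves:
        print(f"move {shard}: {source} -> {target}")
```

The sketch only counts shards per server. A real cluster would also have to consider shard sizes, replicas and the cost of moving data while serving traffic.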

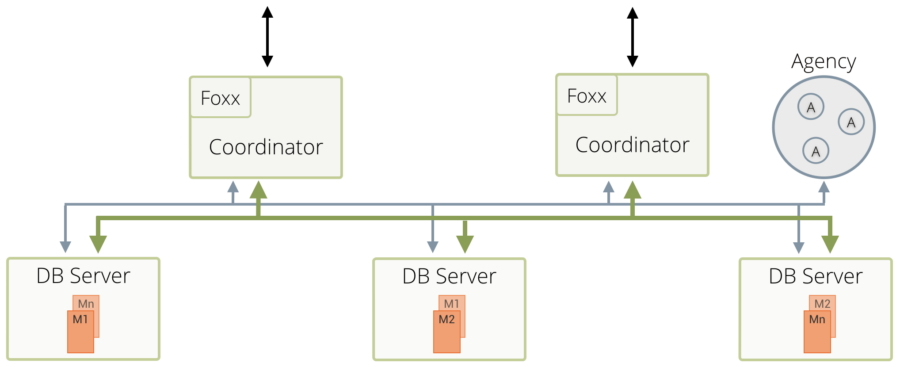

cluster topology

Cluster setup greatly simplified

The cluster setup in ArangoDB 3.0 has been greatly simplified. We were getting a lot of questions about how to set up a cluster in different environments, and with earlier releases it was very hard to deploy a cluster anywhere other than on our supported cluster environments. You should now finally be able to set it up on whatever platform you like.

The missing puzzle piece: DC/OS

Nevertheless, we continue to pursue a perfectly smooth DC/OS experience, and our integration continues to be the model of a good cluster integration. When launched on a DC/OS cluster, ArangoDB will automatically offer up- and downscaling with just a click.

Furthermore, DC/OS will handle task supervision, and our integration will handle automatic failover, task rescheduling and so forth, so you don’t have to care about your datacenter.

Striving for zero-maintenance

To sum it up, the revamped cluster mode offers near-zero-maintenance operation while still promising cutting-edge scaling capabilities. A simple failure scenario:

1. A DBServer dies

2. ArangoDB’s internal cluster management will quickly discover this and fail over to the followers of all synchronously replicated collections

3. DC/OS will discover that the cluster setup is missing a DBServer

4. DC/OS will reschedule the start of a new DBServer

5. The new DBServer will announce itself to the ArangoDB cluster and integrate itself

No user interaction whatsoever was necessary, and the cluster was accessible and usable at all times. Only the log files will reveal that anything happened at all.
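The scenario above can be played through with a small toy model. This is a simplified simulation for illustration, not ArangoDB's or DC/OS's implementation. The `Shard` class, the functions `fail_over` and `integrate` and all server names are hypothetical.

```python
class Shard:
    """One shard with a leader and a list of in-sync followers (toy model)."""

    def __init__(self, name, leader, followers):
        self.name = name
        self.leader = leader
        self.followers = list(followers)


def fail_over(shards, dead_server):
    """Steps 1-2: drop the dead server and promote a follower where it was leader."""
    promoted = []
    for shard in shards:
        if dead_server in shard.followers:
            shard.followers.remove(dead_server)
        if shard.leader == dead_server:
            if not shard.followers:
                raise RuntimeError(f"shard {shard.name} has no follower left")
            # Followers are synchronous copies, so any of them can take over.
            shard.leader = shard.followers.pop(0)
            promoted.append(shard.name)
    return promoted


def integrate(shards, new_server, replication_factor):
    """Steps 3-5: a rescheduled server joins and fills up missing copies."""
    repaired = []
    for shard in shards:
        copies = 1 + len(shard.followers)
        already_used = new_server == shard.leader or new_server in shard.followers
        if copies < replication_factor and not already_used:
            shard.followers.append(new_server)
            repaired.append(shard.name)
    return repaired


if __name__ == "__main__":
    shards = [Shard("s1", "db1", ["db2"]), Shard("s2", "db2", ["db1"])]
    print("promoted:", fail_over(shards, "db1"))      # db1 dies
    print("repaired:", integrate(shards, "db3", 2))   # db3 is started as a replacement
    for shard in shards:
        print(shard.name, "leader:", shard.leader, "followers:", shard.followers)
```

In this model every shard keeps a leader throughout, which mirrors the claim that the cluster stays accessible while the replacement server catches up.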

ArangoDB’s cluster mode strives to be much more than simple master-master replication and offers self-organizing, self-healing cluster management. A true happy place for developers and operators alike.
